People always fear what they don’t understand. In a recent survey by AAA, 78% of U.S. drivers report feeling afraid to ride in an autonomous vehicle (AV), and 54% would feel less safe sharing the road with driverless cars.

Statistics and a series of unfortunate autonomous driving incidents in recent years suggest that AVs are still far from a mature technology. Add the “fear of novelty,” public scrutiny of every incident, and a general lack of understanding of how AVs work, and it is no wonder people are wary of this new technology.

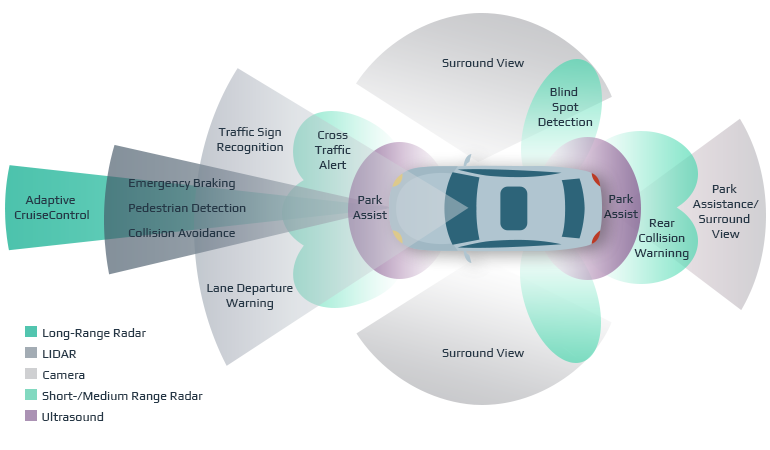

Nevertheless, the right combination of sensor fusion algorithms can bring autonomous driving to a new level of safety and help overcome these fears. Fusing data from the five sensors outlined below, together with connectivity to our road infrastructure, is designed to eliminate human error, thereby increasing safety and preventing road fatalities.

When five senses are better than one

Sensor fusion is the merging of data from multiple sensors to achieve an outcome that far exceeds what each sensor could achieve individually.

AVs need to perceive their environment with very high precision and accuracy to ensure a safe driving experience, a need that is also driving data analytics solutions in automotive. Fusing data from a combination of sensors at speeds of one gigabyte per second leaves drivers safe in the knowledge that their AV is fully prepared for all road scenarios.

Sensor fusion for autonomous driving finds strength in numbers

All technology has its strengths and weaknesses, and the individual sensors found in AVs would struggle to work as standalone systems. Fusing only the strengths of each sensor creates high-quality, overlapping data patterns, so the processed data is as accurate as possible.

A fused sensor system combines the benefits of single sensors to construct a hypothesis about the state of the environment the vehicle is in.
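To make the idea of a fused estimate concrete, here is a minimal sketch of one simple fusion rule, inverse-variance weighting, applied to two independent distance readings of the same obstacle. The rule, the function name `fuse_measurements`, and the readings and variances are illustrative assumptions, not the method of any particular AV system.

```python
def fuse_measurements(readings: list[tuple[float, float]]) -> tuple[float, float]:
    """Fuse independent estimates of one quantity by inverse-variance weighting.

    Each reading is (value, variance). Returns (fused_value, fused_variance).
    """
    # More certain sensors (smaller variance) get proportionally more weight.
    weights = [1.0 / var for _, var in readings]
    total = sum(weights)
    fused_value = sum(w * value for w, (value, _) in zip(weights, readings)) / total
    # The fused variance is smaller than any single input variance.
    fused_variance = 1.0 / total
    return fused_value, fused_variance


# Hypothetical distance-to-obstacle readings: (meters, variance in m^2).
ultrasonic = (2.10, 0.04)
lidar = (2.00, 0.01)
distance, variance = fuse_measurements([ultrasonic, lidar])
```

Here the fused distance lands at 2.02 m, closer to the more precise reading, and its variance (0.008 m²) is lower than either sensor's alone, which is the sense in which the fused hypothesis beats each individual sensor.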

Types of the most critical autonomous vehicle sensors

The best mix of sensors for autonomous driving safety includes:

- Ultrasonic sensors – to detect obstacles in the immediate vicinity

- GPS – to calculate longitude, latitude, speed, and course

- Speed and angle sensors – to measure wheel speed and steering angle

- LIDAR – to allow obstacles to be correctly identified

- Camera – to detect, classify and determine the distance from objects

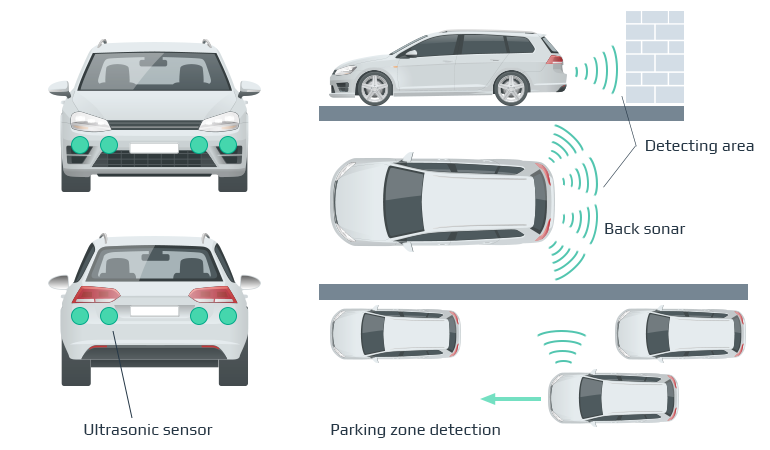

Ultrasonic sensors

Ultrasonic sensors can play an integral role in the safety of a driverless vehicle. They imitate the navigation process of bats, using the echo times of sound waves that bounce off nearby objects. From this information, the sensors can determine how far away objects are and alert the vehicle’s onboard system as they get closer.

They use sound waves at frequencies higher than the human ear can hear and are suited to low-speed, short- to medium-range applications such as lateral movement, blind spot detection, and parking.
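The echo-timing principle comes down to one line of arithmetic: the pulse travels to the obstacle and back, so the distance is half the round trip multiplied by the speed of sound. The sketch below assumes a speed of sound of 343 m/s (dry air at about 20 degrees C), and its alert thresholds and function names are hypothetical.

```python
SPEED_OF_SOUND_M_PER_S = 343.0  # assumed: dry air at about 20 degrees C


def echo_distance_m(echo_time_s: float, speed_of_sound: float = SPEED_OF_SOUND_M_PER_S) -> float:
    """Distance to an obstacle from an ultrasonic round-trip echo time."""
    # The pulse goes out and comes back, so halve the round-trip path.
    return speed_of_sound * echo_time_s / 2.0


def proximity_alert(distance_m: float, warn_at_m: float = 1.5, stop_at_m: float = 0.3) -> str:
    """Hypothetical alert levels that escalate as the obstacle gets closer."""
    if distance_m <= stop_at_m:
        return "stop"
    if distance_m <= warn_at_m:
        return "warn"
    return "clear"


d = echo_distance_m(0.006)  # a 6 ms round trip
print(round(d, 3), proximity_alert(d))  # prints: 1.029 warn
```

In practice the speed of sound varies with air temperature, so a real system would likely compensate for it rather than use a fixed constant.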

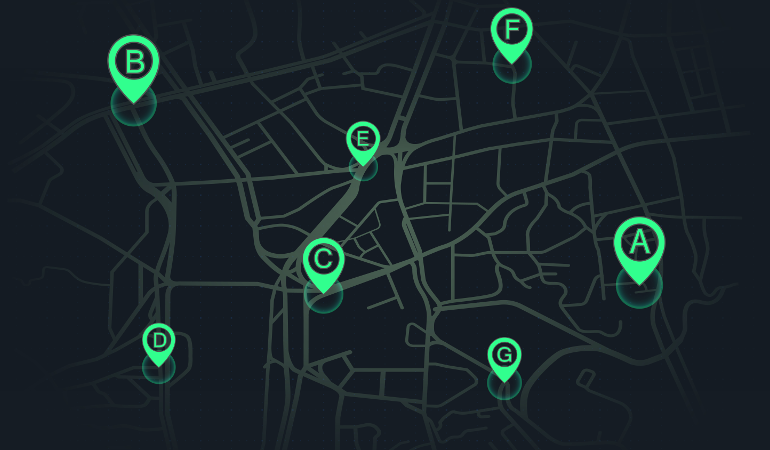

GPS sensors

Using the satellite-based navigation system, the GPS sensor receives geolocation and time information. Provided there is an unobstructed line of sight to four or more satellites, your location can be pinpointed to within a couple of meters.

Although GPS is essential for getting from point A to point B, it would not suffice on its own, because weather conditions and an obstructed line of sight can hamper its reliability. Combined with information from other sensors, however, it contributes a vital part to the synergy.
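As an illustration of how speed and course relate to position fixes, the sketch below derives them from two consecutive GPS fixes, assuming a spherical Earth (haversine distance and initial great-circle bearing). Receivers may report speed and course through other means; the fix format and function names here are assumptions for the example.

```python
import math

EARTH_RADIUS_M = 6_371_000.0  # mean Earth radius (spherical-Earth assumption)


def haversine_m(lat1: float, lon1: float, lat2: float, lon2: float) -> float:
    """Great-circle distance in meters between two points given in degrees."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = phi2 - phi1
    dlmb = math.radians(lon2 - lon1)
    a = math.sin(dphi / 2) ** 2 + math.cos(phi1) * math.cos(phi2) * math.sin(dlmb / 2) ** 2
    return 2 * EARTH_RADIUS_M * math.asin(math.sqrt(a))


def initial_bearing_deg(lat1: float, lon1: float, lat2: float, lon2: float) -> float:
    """Course from the first point toward the second: 0 = north, 90 = east."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dlmb = math.radians(lon2 - lon1)
    x = math.sin(dlmb) * math.cos(phi2)
    y = math.cos(phi1) * math.sin(phi2) - math.sin(phi1) * math.cos(phi2) * math.cos(dlmb)
    return (math.degrees(math.atan2(x, y)) + 360.0) % 360.0


def speed_and_course(fix1: tuple[float, float, float], fix2: tuple[float, float, float]) -> tuple[float, float]:
    """Each fix is (latitude_deg, longitude_deg, time_s). Returns (speed m/s, course deg)."""
    lat1, lon1, t1 = fix1
    lat2, lon2, t2 = fix2
    distance = haversine_m(lat1, lon1, lat2, lon2)
    return distance / (t2 - t1), initial_bearing_deg(lat1, lon1, lat2, lon2)


# Two hypothetical fixes 5 s apart, moving due north.
speed, course = speed_and_course((50.0, 30.0, 0.0), (50.001, 30.0, 5.0))
```

Because each fix carries an error of a couple of meters, speeds derived this way over short intervals are noisy, which is one more reason GPS benefits from fusion with wheel speed data.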

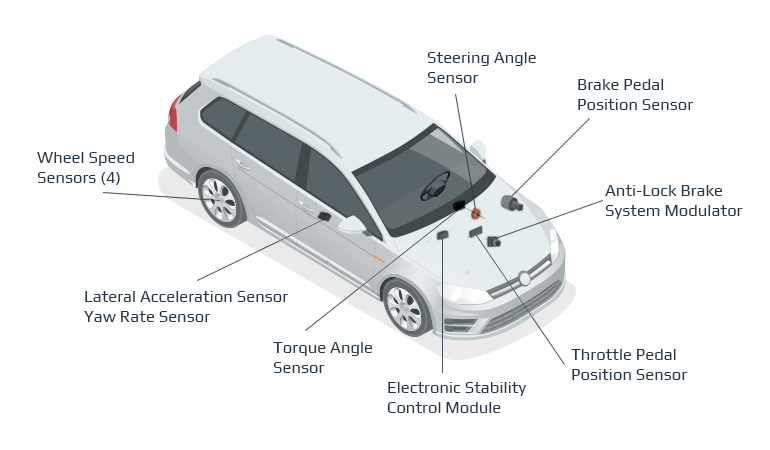

Speed and angle sensors

Speed sensors record the rotational speed of the wheels, along with acceleration in both the longitudinal and vertical axes, and communicate this data to the driving safety system.
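One common way to turn wheel rotation into speed is to count pulses from a toothed wheel over a fixed interval. The sketch below assumes that kind of sensor; the pulse count per revolution, the tire radius, and the simple averaging across wheels are hypothetical choices for illustration.

```python
import math


def wheel_speed_m_per_s(pulse_count: int, pulses_per_rev: int, tire_radius_m: float, interval_s: float) -> float:
    """Linear wheel speed from pulses counted during one sampling interval."""
    revolutions = pulse_count / pulses_per_rev
    distance_m = revolutions * 2 * math.pi * tire_radius_m  # distance per revolution = circumference
    return distance_m / interval_s


def vehicle_speed(wheel_speeds: list[float]) -> float:
    """Crude vehicle speed estimate (assumed): the mean of the individual wheel speeds."""
    return sum(wheel_speeds) / len(wheel_speeds)


# Hypothetical sensor: 48 pulses per revolution, 0.3 m tire radius, 100 ms window.
front_left = wheel_speed_m_per_s(48, 48, 0.3, 0.1)
```

A simple mean ignores effects such as wheel slip and cornering, where inner and outer wheels turn at different rates; production systems handle those cases more carefully.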

The steering angle sensor determines where the front wheels are pointed. Combined with other data, it makes it possible to measure the dynamics of the AV.

When geolocation is degraded or lost, such as when traveling through a tunnel, data from speed and angle sensors are combined to create a solution known as “Dead Reckoning.”

Dead Reckoning is the process of calculating one’s current position by using a previously determined position, or fix, and advancing that position based upon known or estimated speeds over elapsed time and course.
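Dead reckoning can be sketched as repeatedly advancing the last known fix using speed, elapsed time, and a heading that changes with the steering angle. The example below assumes a kinematic bicycle model and simple Euler integration; the pose convention (heading 0 = east, counter-clockwise positive), the wheelbase, and all names are assumptions, not a production algorithm.

```python
import math
from dataclasses import dataclass


@dataclass
class Pose:
    x_m: float          # east offset from the last GPS fix
    y_m: float          # north offset from the last GPS fix
    heading_rad: float  # 0 = east, counter-clockwise positive (assumed convention)


def dead_reckon(pose: Pose, speed_m_s: float, steering_rad: float, wheelbase_m: float, dt_s: float) -> Pose:
    """Advance a pose by one time step using a kinematic bicycle model."""
    # Yaw rate implied by the front-wheel steering angle.
    yaw_rate = speed_m_s * math.tan(steering_rad) / wheelbase_m
    # Move along the current heading for dt_s seconds (Euler integration).
    x = pose.x_m + speed_m_s * math.cos(pose.heading_rad) * dt_s
    y = pose.y_m + speed_m_s * math.sin(pose.heading_rad) * dt_s
    return Pose(x, y, pose.heading_rad + yaw_rate * dt_s)


# Last fix at a tunnel entrance, then 10 s of speed and steering samples at 10 Hz.
pose = Pose(0.0, 0.0, 0.0)
for _ in range(100):
    pose = dead_reckon(pose, speed_m_s=20.0, steering_rad=0.0, wheelbase_m=2.8, dt_s=0.1)
```

Small errors in speed or steering accumulate with every step, so dead reckoning drifts over time; that is why the position is corrected as soon as a fresh GPS fix becomes available.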

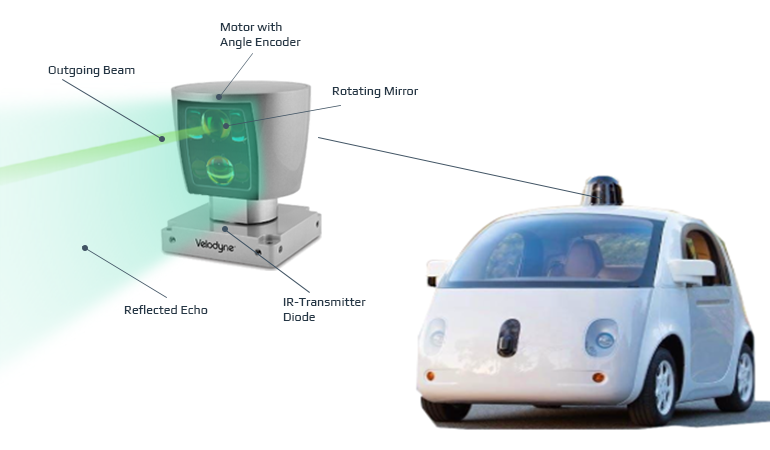

LIDAR sensors

LIDAR (Light Detection and Ranging) sensors are arguably the most capable sensors used in AVs. They emit laser light to identify surrounding objects, literally at the speed of light.

Differences in laser return times and wavelengths are used to create a digital 3D representation of the surroundings and to pinpoint your location within a circle 4 inches in diameter. Using arrays of single and multiple scan lines, and with a level of precision unmatched by other sensors, LIDAR can even detect individual drops of rain.

LIDAR can also calculate the distance, speed, and direction of the vehicle, and it complements and works with existing safety features.
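The 3D representation is built from individual returns: each laser pulse yields a range (from its round-trip time) plus the beam’s azimuth and elevation, which convert to an (x, y, z) point. This minimal sketch assumes a sensor frame with x forward, y left, and z up, a common but not universal convention; the sample returns are hypothetical.

```python
import math

SPEED_OF_LIGHT_M_PER_S = 299_792_458.0


def lidar_return_to_point(round_trip_s: float, azimuth_deg: float, elevation_deg: float) -> tuple[float, float, float]:
    """Convert one lidar return into an (x, y, z) point in the sensor frame."""
    # Time of flight: the pulse travels out and back, so halve the path.
    r = SPEED_OF_LIGHT_M_PER_S * round_trip_s / 2.0
    az, el = math.radians(azimuth_deg), math.radians(elevation_deg)
    # Spherical-to-Cartesian conversion (x forward, y left, z up).
    x = r * math.cos(el) * math.cos(az)
    y = r * math.cos(el) * math.sin(az)
    z = r * math.sin(el)
    return x, y, z


# A scan is many returns; together they form a 3D point cloud.
returns = [(200e-9, 0.0, 0.0), (200e-9, 90.0, 0.0), (100e-9, 0.0, -10.0)]
point_cloud = [lidar_return_to_point(*ret) for ret in returns]
```

A 200 ns round trip corresponds to roughly 30 m, which shows why lidar timing electronics must resolve intervals far shorter than a nanosecond to achieve centimeter-level precision.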

Cameras

An essential key to safe autonomous driving is the ability to accurately perceive and discriminate both stationary and moving obstacles in the environment.

A cost-effective solution for self-driving car safety is to use camera sensors. Rear and 360-degree cameras, which provide images of the environment outside the vehicle, are commonly used.

Stereo vision works much like our own two eyes: two cameras a fixed distance apart capture slightly different images of the same scene, from which depth can be computed. The data from these sensors allows the vehicle to measure its distance from both near and far away objects.
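For a rectified stereo pair, depth follows from the disparity, the horizontal pixel shift of a point between the left and right images: Z = f · B / d, where f is the focal length in pixels and B the distance between the cameras. The camera parameters below are hypothetical values chosen for illustration.

```python
def stereo_depth_m(disparity_px: float, focal_length_px: float, baseline_m: float) -> float:
    """Depth of a point seen by a rectified stereo camera pair: Z = f * B / d."""
    if disparity_px <= 0:
        # Zero disparity means the point is effectively at infinity.
        raise ValueError("disparity must be positive")
    return focal_length_px * baseline_m / disparity_px


# Hypothetical rig: 1000 px focal length, cameras 0.12 m apart.
near = stereo_depth_m(60.0, 1000.0, 0.12)  # large disparity -> close object
far = stereo_depth_m(2.0, 1000.0, 0.12)    # small disparity -> distant object
```

Because depth is inversely proportional to disparity, a one-pixel matching error barely matters at 2 m but shifts the estimate by many meters at 60 m, so stereo accuracy degrades with distance.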

Infrared vision enhances that ability by picking up thermal (heat) signatures to differentiate between humans and objects. It is also essential for noticing temperature differences on the road surface, which can alert the AV to black ice.

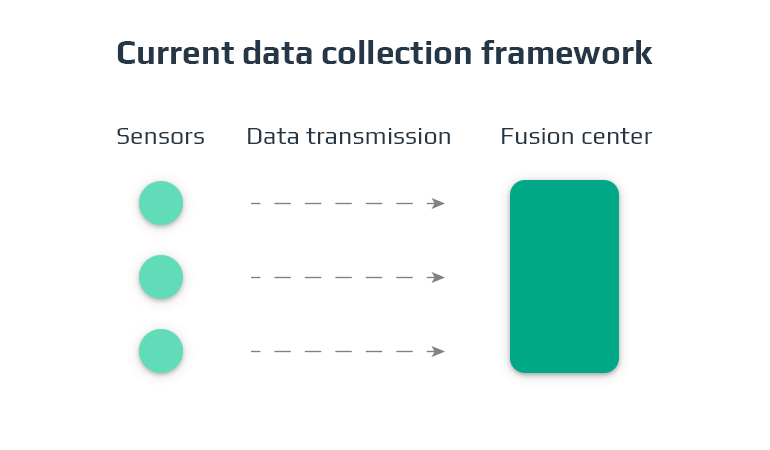

The importance of data collection and sensor fusion

Data from this combination of sensors is essential for safe autonomous driving. The sensors take in the vehicle’s speed, direction, geolocation, and distance from other objects, to the point where they surpass human capabilities.

The information enters a telemetry process and is transmitted to receiving equipment, such as the onboard systems or a communication hub for monitoring. This exchange allows AVs to self-configure, predict, and adapt to their environment with no human intervention.

The data is a goldmine for car manufacturers and service companies, who strive to build superior AVs and to train the next generation of machine learning algorithms.

Currently, the most advanced AV on the road uses three video cameras and a set of ultrasonic sensors. For a fully automated system that ensures the safety of passengers and other road users, multi-sensor data fusion would be safer, faster, and more efficient.

Sensor fusion algorithms predict what happens next

To combine this data into an optimal sensor mix, we need sensor fusion algorithms to compute the information.

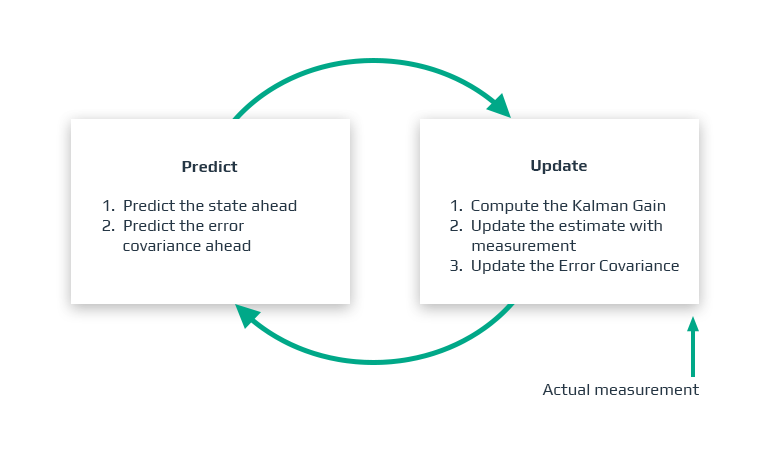

One example is the Kalman filter.

A Kalman filter can be used to predict the next actions of the car or object ahead, based on the data our vehicle receives from its sensors. Kalman filters rely on probability and a predict-and-update cycle to build an understanding of the world.

The filter predicts the next state, gathers sensor measurements, updates its estimate, and then repeats the cycle indefinitely.
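The predict-and-update cycle can be sketched with a small one-dimensional Kalman filter that tracks the position and velocity of an object ahead from position-only measurements. This is a minimal illustration assuming a constant-velocity motion model with white-noise acceleration; the noise values, initial covariance, and class name are hypothetical, and real AV trackers use richer, multi-dimensional models.

```python
from dataclasses import dataclass, field


@dataclass
class ConstantVelocityKF:
    """1-D constant-velocity Kalman filter: state [position, velocity], position measured."""
    dt: float = 1.0   # time between measurements (s), assumed fixed
    q: float = 0.01   # process-noise intensity (assumed)
    r: float = 1.0    # measurement-noise variance (assumed)
    x: list = field(default_factory=lambda: [0.0, 0.0])
    P: list = field(default_factory=lambda: [[100.0, 0.0], [0.0, 100.0]])

    def predict(self) -> None:
        dt, q = self.dt, self.q
        p, v = self.x
        (a, b), (c, d) = self.P
        # State transition: position advances by velocity * dt.
        self.x = [p + v * dt, v]
        # Covariance: P = F P F^T + Q (white-noise acceleration model).
        self.P = [
            [a + dt * (b + c) + dt * dt * d + q * dt**4 / 4, b + dt * d + q * dt**3 / 2],
            [c + dt * d + q * dt**3 / 2, d + q * dt**2],
        ]

    def update(self, z: float) -> None:
        p, v = self.x
        (a, b), (c, d) = self.P
        y = z - p               # innovation: measured minus predicted position
        s = a + self.r          # innovation variance
        k0, k1 = a / s, c / s   # Kalman gain
        self.x = [p + k0 * y, v + k1 * y]
        # Covariance: P = (I - K H) P
        self.P = [[(1 - k0) * a, (1 - k0) * b], [c - k1 * a, d - k1 * b]]

    def step(self, z: float) -> list:
        self.predict()
        self.update(z)
        return self.x


kf = ConstantVelocityKF()
for k in range(1, 21):
    kf.step(2.0 * k)  # noiseless readings of an object ahead moving at 2 m/s
position, velocity = kf.x
predicted_next = position + velocity * kf.dt  # where the object should be one step later
```

Starting from a velocity guess of zero, the estimate converges toward 2 m/s as measurements arrive, and the same `predict` step is what lets the vehicle anticipate where the object ahead will be next.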

Once the AV has produced these calculations, they need to be combined with smart, connected infrastructure, so the vehicle has better knowledge of its surroundings and what lies ahead.

For instance, using its cameras the AV can recognize a stop sign, combine that with speed sensor data, and know exactly when to apply the brakes.
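The stop-sign example reduces to a braking-distance check: the distance covered during system latency plus the distance needed to stop at a constant deceleration, v · t + v² / (2a). The sketch assumes the distance to the sign is already known from camera or fused data; the deceleration, latency, safety margin, and function names are hypothetical.

```python
def stopping_distance_m(speed_m_s: float, decel_m_s2: float, latency_s: float) -> float:
    """Distance traveled during system latency plus braking at constant deceleration."""
    return speed_m_s * latency_s + speed_m_s**2 / (2.0 * decel_m_s2)


def should_brake(distance_to_sign_m: float, speed_m_s: float, decel_m_s2: float = 3.0,
                 latency_s: float = 0.2, margin_m: float = 2.0) -> bool:
    """Start braking once the remaining distance falls within stopping distance plus a margin."""
    return distance_to_sign_m <= stopping_distance_m(speed_m_s, decel_m_s2, latency_s) + margin_m


# At 15 m/s (54 km/h): 3 m of latency travel + 37.5 m of braking = 40.5 m.
needed = stopping_distance_m(15.0, 3.0, 0.2)
```

The quadratic speed term is why the decision point moves back sharply at higher speeds: doubling the speed roughly quadruples the braking portion of the distance.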

Accidental deaths on the road are diminished

Today, the fusion of data from these five sensors, in the form of sound waves, light waves, geolocation, vision, and speed-based sensors, substantially reduces the risk of accidental deaths in human-driven vehicles. Advanced fusion sensors developed for the full automation of driverless cars are already available for you to use.

With real-time, split-second decisions based on more senses than your own brain could ever develop, you can set your fears aside and embrace the emerging future of transportation.

A safer, faster, driverless world is coming. Are you ready?

Intellias has the knowledge and experience to create, collaborate on, and deliver solutions for automotive sensor fusion. Contact us to discover what we can do for you. Apart from building AI-powered sensor fusion solutions, we develop handwriting recognition software, another step toward on-road safety.